Alex and I went to this, and neither of us was quite sure what to make of it. The band’s idea behind the Decomposition Theory project is to fuse algorithmically generated music with a live performance (“custom-made procedural audio processes, generative music programs, and live-coded noise”). The first two tracks they played were promising, featuring heavy beats that moved the crowd, but after that the set drifted much more into…bleep-bloops and noise. Alex and I both came out of it quite confused. What parts were algorithmically generated, and what parts were being performed at the time? How much of the music was algorithmically generated beforehand, as opposed to being randomly generated live on stage each night? How much of it was a deliberately curated statement of human art, and how much did they let the machines get the better of them?

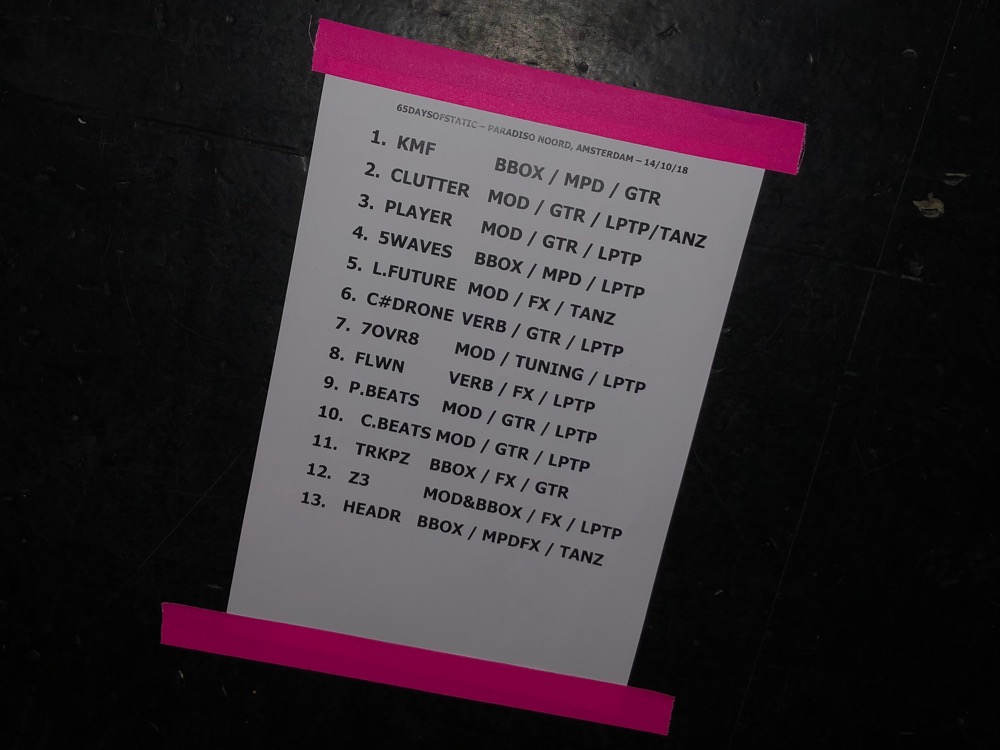

I enjoy experimental and generated music, but there was so much here that I didn’t appreciate because I didn’t understand it. Up on stage Paul Wolinski, Rob Jones, and Simon Wright (Joe Shrewsbury wasn’t present) were banging along on synths, guitars, and noise generators, and really getting into it. In the crowd, fans were swaying along to beats I couldn’t follow. I wish there had been a booklet to go with the show, because I feel like I was missing something.

(So was the guy who kept shouting out “Radio Protector!” after the band finished each piece. I hope he hadn’t been expecting a “best of” concert.)